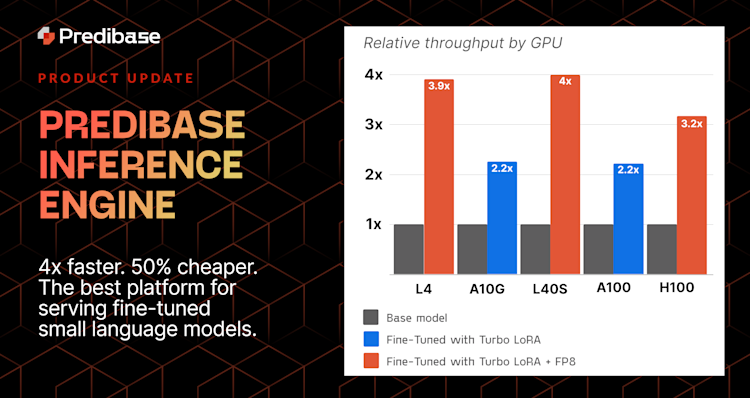

We’ve recently made some major improvements that make fine-tuning jobs 2-5x faster! You can also view and manage your deployments from one place in the UI, and we’ve made training checkpoints more useful in case fine-tuning jobs fail or get stopped. Read on!

Fine-Tuning Jobs Now Take 55-80% Less Time

We’ve been busy implementing a number of optimizations that combine to make fine-tuning jobs roughly 2-5x faster. We’re excited to see customers start iterating faster and spending less time waiting to see their fine-tuning results. These improvements include:

- Shifting all SaaS customers–even free trial users–to A100 GPUs for fine-tuning at no extra cost

- Optimizing various training parameters to saturate GPUs and push training throughput, including setting batch_size to Auto by default
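The "55-80% less time" in the heading and the "2-5x faster" figure describe the same improvement in two ways. As a quick sanity check, here is a minimal sketch of the conversion. The helper name is made up for illustration and is not part of any Predibase tooling.

```python
def speedup_from_time_saved(fraction_saved: float) -> float:
    """Convert 'takes X% less time' into an 'N times faster' factor.

    If a job that took T now takes T * (1 - fraction_saved),
    the speedup is T / (T * (1 - fraction_saved)).
    """
    if not 0 <= fraction_saved < 1:
        raise ValueError("fraction_saved must be in [0, 1)")
    return 1.0 / (1.0 - fraction_saved)


# The two ends of the range quoted in the heading above.
for saved in (0.55, 0.80):
    factor = speedup_from_time_saved(saved)
    print(f"{saved:.0%} less time is about {factor:.1f}x faster")
```

So the 55-80% range corresponds to a speedup of a little over 2x at the low end and 5x at the high end, which matches the "roughly 2-5x" figure.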

Give fine-tuning a try today for free!

Manage Deployments Through an Intuitive UI

We just launched the new Deployments page where you can view and manage all of your serverless and dedicated deployments in one place! You can now use the UI to:

- Manage existing deployments

- Create new deployments

- View detailed event histories and logs

Try it yourself or read more on our blog.

Fine-Tuned Models Can be Used If They’ve Passed a Checkpoint

If you stop a fine-tuning job–or if a job fails–after it has already passed a training checkpoint, the model can be used for inference from the latest checkpoint that was saved. This means teams can stop training to test a model mid-way through fine-tuning and see how it’s improving.

Restart Training Jobs from the Latest Checkpoint

If your training job experiences a CUDA out of memory error (CUDA OOM) but has already passed a training checkpoint, you can now simply restart the job from the latest checkpoint rather than from the beginning. We know there’s not much worse than having to restart a job after hours of training, so we’re excited to make this improvement!

Open Source

LoRAX

- Overhauled LoRA memory manager: No more CUDA OOMs. LoRAX will now automatically swap and page adapters when it hits the memory limit of its resource pool.

- New model architecture: Qwen 2!

- Optional max_new_tokens: by default, LoRAX will now generate up to the max_total_tokens, so no more need to mess with this param in most cases.
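To make the new max_new_tokens default concrete, here is a minimal sketch of the token budget it implies. This is an illustration rather than LoRAX's actual code: the function name is hypothetical, and it assumes max_total_tokens counts prompt tokens plus generated tokens and that an explicit max_new_tokens is clamped to the remaining budget (the real server may reject such a request instead).

```python
from typing import Optional


def effective_max_new_tokens(
    prompt_tokens: int,
    max_total_tokens: int,
    max_new_tokens: Optional[int] = None,
) -> int:
    """Return how many tokens may be generated for one request.

    Assumption: max_total_tokens covers the prompt plus the generated tokens.
    """
    remaining = max_total_tokens - prompt_tokens
    if remaining <= 0:
        raise ValueError("prompt already fills the total token budget")
    if max_new_tokens is None:
        # New default behaviour: generate up to the total budget.
        return remaining
    # Assumption: an explicit limit is clamped to the remaining budget.
    return min(max_new_tokens, remaining)


# With a 4096-token budget and a 1000-token prompt, omitting the
# parameter leaves 3096 tokens available for generation.
print(effective_max_new_tokens(prompt_tokens=1000, max_total_tokens=4096))
```

Passing max_new_tokens explicitly is still useful when you want a hard cap on response length or latency.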

Ludwig

LoRA Enhancements: Add support for RSLoRA and DoRA by @arnavgarg1 in #3948. To enable, set the corresponding flag to “true” in the config (the two can be used in conjunction):

    adapter:
      type: lora
      use_rslora: false
      use_dora: false

Optimal Batches: Add support for eval batch size tuning for LLMs on local backend by @arnavgarg1 in #3957. To enable, set “eval_batch_size” to “auto” in the trainer section:

    trainer:
      eval_batch_size: auto

Improved Experimentation: Enable loading model weights from the latest training checkpoint by @geoffreyangus in #3969. To enable, pass “from_checkpoint=True” to “LudwigModel.load()”:

    LudwigModel.load(model_dir, from_checkpoint=True)
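The three Ludwig options above can be combined from Python. The following is a rough sketch under a few assumptions: the dictionary holds only the adapter and trainer fragments and would need to be merged into a full Ludwig LLM config (base model, input and output features) before training, both adapter flags are switched on purely for illustration, and the helper name is hypothetical.

```python
# Config fragments mirroring the YAML keys above.
# Assumption: both flags are turned on here only to show them used together.
config_overrides = {
    "adapter": {
        "type": "lora",
        "use_rslora": True,  # rank-stabilized LoRA scaling
        "use_dora": True,    # weight-decomposed LoRA
    },
    "trainer": {
        "eval_batch_size": "auto",  # let Ludwig tune the eval batch size
    },
}


def load_latest_checkpoint(model_dir: str):
    """Load model weights from the latest training checkpoint in model_dir.

    Ludwig is imported lazily so this file can be read or imported
    without the library installed.
    """
    from ludwig.api import LudwigModel

    return LudwigModel.load(model_dir, from_checkpoint=True)
```

Loading from the latest checkpoint is handy when a run was interrupted and you want to inspect the partially trained model, for example `model = load_latest_checkpoint("results/experiment_run/model")`, where the path is a placeholder for your own output directory.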